How can you remotely detect changes in data quality? Sensor networks are increasingly common, but keeping a large-scale network of instruments producing the best possible data around the clock can be a daunting task, especially when the measurements must be of very high quality (at parts-per-billion levels). The field sensors are only one part of a robust operating system, particularly when little maintenance is planned for the network over the course of a campaign.

Hierarchical Network Analysis: Operating long-term, automated, multi-tier sensor networks.

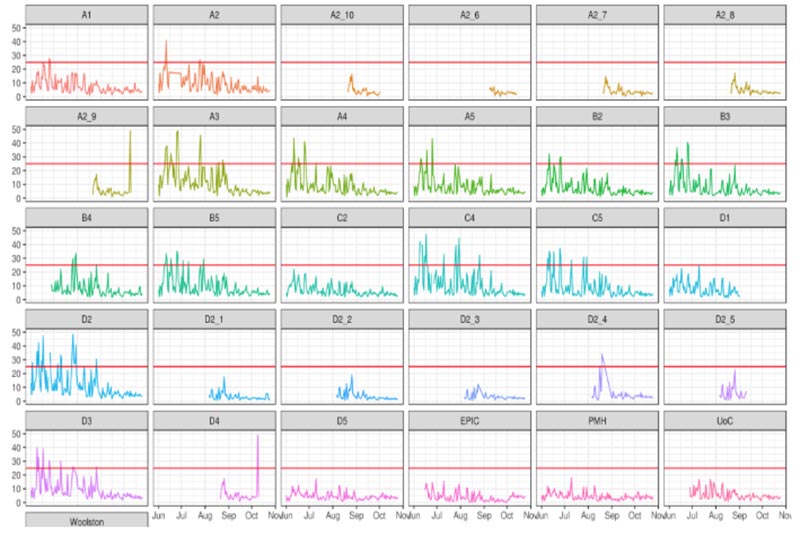

Analysis from one automated operational test for non-instrument-related data faults across a 30-node remote sensor network over a 12-month period. Automated tests like this alert operators, allowing them to investigate and resolve faults and to improve data performance at enterprise scale.

Mote has invested heavily in building automated operating systems that continuously run a variety of advanced mechanistic tests and mathematical analyses on every instrument feed. The key objectives are maximising valid data delivery, rationalising anomalies, and minimising “cost of service”.
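The individual tests Mote runs are not described here. As a minimal sketch, assuming each feed is examined over a recent window of readings, an automated layer might apply a set of simple per-feed checks and collect alerts for operators. The two checks shown (a plausible-range check and a "flatline" check for a stuck sensor), their thresholds, and the names `range_check`, `flatline_check` and `run_checks` are hypothetical illustrations, not Mote's system.

```python
from dataclasses import dataclass
from statistics import pstdev
from typing import Callable, Dict, List, Optional, Sequence

# A check takes a window of readings and returns an alert message,
# or None if the window passes. (Hypothetical interface.)
Check = Callable[[Sequence[float]], Optional[str]]


def range_check(lo: float, hi: float) -> Check:
    """Flag readings outside a plausible range (limits are illustrative)."""
    def check(window: Sequence[float]) -> Optional[str]:
        bad = [x for x in window if not lo <= x <= hi]
        return f"{len(bad)} reading(s) outside [{lo}, {hi}]" if bad else None
    return check


def flatline_check(min_std: float) -> Check:
    """Flag a 'stuck' sensor whose readings barely vary over the window."""
    def check(window: Sequence[float]) -> Optional[str]:
        if len(window) >= 2 and pstdev(window) < min_std:
            return f"flatline: std dev below {min_std}"
        return None
    return check


@dataclass
class Alert:
    node: str
    message: str


def run_checks(feeds: Dict[str, Sequence[float]], checks: List[Check]) -> List[Alert]:
    """Apply every check to every feed and collect the resulting alerts."""
    alerts: List[Alert] = []
    for node, window in feeds.items():
        for check in checks:
            msg = check(window)
            if msg is not None:
                alerts.append(Alert(node, msg))
    return alerts


if __name__ == "__main__":
    # Hypothetical windows of readings (ppb) from three nodes.
    feeds = {
        "node-01": [21.0, 23.5, 22.1, 24.0],
        "node-02": [30.0, 30.0, 30.0, 30.0],   # stuck sensor
        "node-03": [18.0, 250.0, 19.5, 20.1],  # out-of-range spike
    }
    for alert in run_checks(feeds, [range_check(0.0, 200.0), flatline_check(0.01)]):
        print(f"{alert.node}: {alert.message}")
```

Keeping each check as a small function that returns either a message or `None` means new tests can be added to the list without touching the dispatch loop.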

A key focus is predicting issues in advance to enable proactive servicing and maintenance. For example, advanced statistical tools such as the Kolmogorov–Smirnov (KS) test are routinely used to identify whether an instrument is deviating abnormally from expectation (e.g., because of non-instrumental, environmental effects).
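How the KS test is applied in practice is not specified here. A minimal sketch, assuming a node's recent readings are compared against a reference sample over the same window (for example, readings from a neighbouring or co-located instrument), is the two-sample KS test: it measures the largest gap between the two empirical distribution functions and flags the node when that gap exceeds a threshold. The function names, the significance level α = 0.05, and the use of the large-sample approximation for the threshold are illustrative assumptions.

```python
import math
from bisect import bisect_right
from typing import Sequence, Tuple


def ks_statistic(a: Sequence[float], b: Sequence[float]) -> float:
    """Two-sample KS statistic D: the largest gap between the empirical CDFs."""
    xs, ys = sorted(a), sorted(b)
    n, m = len(xs), len(ys)
    d = 0.0
    # Both empirical CDFs only jump at observed values, so the maximum
    # gap is found by evaluating them at every observation.
    for v in xs + ys:
        fa = bisect_right(xs, v) / n
        fb = bisect_right(ys, v) / m
        d = max(d, abs(fa - fb))
    return d


def ks_critical_value(n: int, m: int, alpha: float = 0.05) -> float:
    """Large-sample approximation to the two-sample KS rejection threshold."""
    c = math.sqrt(-math.log(alpha / 2) / 2)
    return c * math.sqrt((n + m) / (n * m))


def deviates(node: Sequence[float], reference: Sequence[float],
             alpha: float = 0.05) -> Tuple[bool, float]:
    """Return (flag, D); flag is True when the node's distribution differs
    significantly from the reference sample at level alpha."""
    d = ks_statistic(node, reference)
    return d > ks_critical_value(len(node), len(reference), alpha), d
```

A node whose readings shift well away from its reference returns `(True, D)`, which could then raise an alert for investigation. The asymptotic threshold is only reliable for reasonably large windows; `scipy.stats.ks_2samp` computes the same statistic together with a p-value if SciPy is available.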

G. Miskell, Reliable data from low-cost sensor networks. PhD Thesis, University of Auckland, December 2017.